Drawing On The iPhone Canvas With jQuery And ColdFusion

The HTML Canvas element is something that I've known about for a long time but never actually looked at until yesterday. The Canvas element is just what it sounds like - a surface on which we can programmatically render graphics and shapes. After seeing some really cool canvas-based demos floating around on Twitter, I decided that it was finally time to see what this web-2.0 element could do.

To make my research a bit more exciting (not that the canvas isn't exciting enough on its own), I thought I would play around with the canvas element in the context of my iPhone's webkit browser. In this way, not only could I learn about the canvas, I could also learn more about the iPhone's unique touch-based events (touchstart, touchmove, touchend) used in gesturing. The idea was simple - use your finger as a brush and touch the iPhone's screen to start "finger painting" on the canvas.

Before I could start coding this jQuery experiment, I needed to learn something about the iPhone's touch events. Unlike standard desktop browsers, the iPhone can track multiple, simultaneous touch points. Luckily, I found a great summary over on SitePen about how these events are managed in the browser. Because these events are so unique, their touch data is not exposed directly on the standard jQuery event object (at least not that I could find). As such, I have to get it from the native event on the window object. And while the iPhone browser can track multiple touch points, for simplicity's sake, I'm only going to take the first available touch into account.

For ease of testing, I wanted to be able to access this jQuery canvas experiment on my local computer (such that I didn't have to keep uploading it to a live server). To do so, I had to account for both the iPhone's touch events as well as the standard browser's mouse events. That's why, in the code below, you'll see that I am testing the navigator for its user agent and then subsequently, using that test as a way to bind alternative event types (touch vs. mouse).
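
The user-agent switch described above can be sketched in isolation. This is a minimal sketch rather than the code from the demo: the `getPointerEventNames` helper, its optional `touchPattern` parameter, and its return shape are hypothetical names introduced here for illustration.

```javascript
// Hypothetical helper: pick touch or mouse event names from a user-agent string.
// The default pattern mirrors the demo's case-insensitive "iPhone" test.
function getPointerEventNames(userAgent, touchPattern) {
    var pattern = touchPattern || /iPhone/i;
    var isTouchDevice = pattern.test(userAgent);

    return {
        start: isTouchDevice ? "touchstart" : "mousedown",
        move: isTouchDevice ? "touchmove" : "mousemove",
        end: isTouchDevice ? "touchend" : "mouseup"
    };
}
```

In the page, this could be called once with `navigator.userAgent` and the resulting names passed to `canvas.bind()`. Passing a broader pattern as the second argument is one way to cover other touch devices, assuming they fire the same touch events.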

As exciting as it was to use jQuery and the canvas element in the context of the iPhone, I wanted to take this little menage-a-trois and turn it into a full-on orgy of technologies. After using my finger to draw on the screen, I wanted to then be able to post the drawing coordinates to the server where I could use ColdFusion to create a PNG/JPG of my canvas.

Here is the client-side code with the jQuery and canvas interaction (it works in both a regular browser and on the iPhone):

<!DOCTYPE HTML>

<html>

<head>

<title>iPhone Touch Events With jQuery</title>

<meta

name="viewport"

content="width=device-width, user-scalable=no, initial-scale=1"

/>

<style type="text/css">

body {

margin: 5px 5px 5px 5px ;

padding: 0px 0px 0px 0px ;

}

canvas {

border: 1px solid #999999 ;

-webkit-touch-callout: none ;

-webkit-user-select: none ;

}

a {

background-color: #CCCCCC ;

border: 1px solid #999999 ;

color: #333333 ;

display: block ;

height: 40px ;

line-height: 40px ;

text-align: center ;

text-decoration: none ;

}

</style>

<script type="text/javascript" src="jquery-1.4.2.min.js"></script>

<script type="text/javascript">

// When the window has loaded, scroll to the top of the

// visible document.

jQuery( window ).load(

function(){

// When scrolling the document, using a timeout to

// create a slight delay seems to be necessary.

// NOTE: For the iPhone, the window has a native

// method, scrollTo().

setTimeout(

function(){

window.scrollTo( 0, 0 );

},

50

);

}

);

// When The DOM loads, initialize the scripts.

jQuery(function( $ ){

// Get a reference to the canvas.

var canvas = $( "canvas" );

// Get a reference to our form.

var form = $( "form" );

// Get a reference to our form commands input; this

// is where we will need to save each command.

var commands = form.find( "input[ name = 'commands' ]" );

// Get a reference to the export link.

var exportGraphic = $( "a" );

// Get the rendering context for the canvas (currently,

// 2D is the only one available). We will use this

// rendering context to perform the actual drawing.

var pen = canvas[ 0 ].getContext( "2d" );

// Create a variable to hold the last point of contact

// for the pen (so that we can draw FROM-TO lines).

var lastPenPoint = null;

// This is a flag to determine if we are using an iPhone.

// If not, we want to use the mouse commands, not the

// touch commands.

var isIPhone = (new RegExp( "iPhone", "i" )).test(

navigator.userAgent

);

// ---------------------------------------------- //

// ---------------------------------------------- //

// Create a utility function that simply adds the given

// command to the form input.

var addCommand = function( command ){

// Append the command as a list item.

commands.val( commands.val() + ";" + command );

};

// I take the event X,Y and translate it into a local

// coordinate system for the canvas.

var getCanvasLocalCoordinates = function( pageX, pageY ){

// Get the position of the canvas.

var position = canvas.offset();

// Translate the X/Y to the canvas element.

return({

x: (pageX - position.left),

y: (pageY - position.top)

});

};

// I get appropriate event object based on the client

// environment.

var getTouchEvent = function( event ){

// Check to see if we are on the iPhone. If so,

// grab the native touch event. By its nature,

// the iPhone tracks multiple touch points; but,

// to keep this demo simple, just grab the first

// available touch event.

return(

isIPhone ?

window.event.targetTouches[ 0 ] :

event

);

};

// I handle the touch start event. With this event,

// we will be starting a new line.

var onTouchStart = function( event ){

// Get the native touch event.

var touch = getTouchEvent( event );

// Get the local position of the touch event

// (taking into account scrolling and offset).

var localPosition = getCanvasLocalCoordinates(

touch.pageX,

touch.pageY

);

// Store the last pen point based on touch.

lastPenPoint = {

x: localPosition.x,

y: localPosition.y

};

// Since we are starting a new line, let's move

// the pen to the new point and begin a path.

pen.beginPath();

pen.moveTo( lastPenPoint.x, lastPenPoint.y );

// Add the command to the form for server-side

// image rendering.

addCommand(

"start:" +

(lastPenPoint.x + "," + lastPenPoint.y)

);

// Now that we have initiated a line, we need to

// bind the touch/mouse event listeners.

canvas.bind(

(isIPhone ? "touchmove" : "mousemove"),

onTouchMove

);

// Bind the touch/mouse end events so we know

// when to end the line.

canvas.bind(

(isIPhone ? "touchend" : "mouseup"),

onTouchEnd

);

};

// I handle the touch move event. With this event, we

// will be drawing a line from the previous point to

// the current point.

var onTouchMove = function( event ){

// Get the native touch event.

var touch = getTouchEvent( event );

// Get the local position of the touch event

// (taking into account scrolling and offset).

var localPosition = getCanvasLocalCoordinates(

touch.pageX,

touch.pageY

);

// Store the last pen point based on touch.

lastPenPoint = {

x: localPosition.x,

y: localPosition.y

};

// Draw a line from the last pen point to the

// current touch point.

pen.lineTo( lastPenPoint.x, lastPenPoint.y );

// Render the line.

pen.stroke();

// Add the command to the form for server-side

// image rendering.

addCommand(

"lineTo:" +

(lastPenPoint.x + "," + lastPenPoint.y)

);

};

// I handle the touch end event. Here, we are basically

// just unbinding the move event listeners.

var onTouchEnd = function( event ){

// Unbind event listeners.

canvas.unbind(

(isIPhone ? "touchmove" : "mousemove")

);

// Unbind event listeners.

canvas.unbind(

(isIPhone ? "touchend" : "mouseup")

);

};

// ---------------------------------------------- //

// ---------------------------------------------- //

// Bind the export link to simply submit the form.

exportGraphic.click(

function( event ){

// Prevent the default behavior.

event.preventDefault();

// Submit the form.

form.submit();

}

);

// Bind the touch start event to the canvas. With

// this event, we will be starting a new line. The

// touch event is NOT part of the jQuery event object.

// We have to get the Touch event from the native

// window object.

canvas.bind(

(isIPhone ? "touchstart" : "mousedown"),

function( event ){

// Pass this event off to the primary event

// handler.

onTouchStart( event );

// Return FALSE to prevent the default behavior

// of the touch event (scroll / gesture) since

// we only want this to perform a drawing

// operation on the canvas.

return( false );

}

);

});

</script>

</head>

<body>

<!--- This is where we draw. --->

<canvas

id="canvas"

width="308"

height="358">

</canvas>

<!---

This is the form that will post the drawing information

back to the server.

--->

<form action="export.cfm" method="post">

<!--- The canvas dimensions. --->

<input type="hidden" name="width" value="308" />

<input type="hidden" name="height" value="358" />

<!--- The drawing commands. --->

<input type="hidden" name="commands" value="" />

<!--- This is the export feature. --->

<a href="#">Export Graphic</a>

</form>

</body>

</html>

The concept behind this code is actually quite straightforward: every time the user clicks or touches down on the canvas, record that initial point of contact; then, as the user moves around on the canvas, draw a line from the previous point to the current point. As long as enough mouse or touch events are recorded during the movement, the stroke will be composed of enough small lines to appear smooth and well-arced.

Because the iPhone's touch events are not part of the core jQuery event object, I had to factor out the retrieval of the event data into its own function. In this way, I could easily grab the first available touch event (or mouse event) without having to worry about which browser context I was in.
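
As a hedged alternative to reading `window.event`, jQuery keeps the browser's native event on `event.originalEvent`, so the first touch can also be read from there. The following is a minimal sketch, not the demo's code: `getFirstPoint` is a hypothetical name, and it assumes the handler receives a jQuery event object.

```javascript
// Hypothetical helper: return an object carrying pageX / pageY for either a
// touch-driven or a mouse-driven jQuery event.
function getFirstPoint(event) {
    // jQuery stores the browser's native event on originalEvent.
    var nativeEvent = event.originalEvent || event;
    var touches = nativeEvent.targetTouches;

    // Touch events expose a list of touches; only the first one is used here.
    if (touches && touches.length) {
        return touches[0];
    }

    // Mouse events carry pageX / pageY on the jQuery event itself.
    return event;
}
```

Note that on a touchend event the list of target touches can be empty, in which case this sketch falls back to the event itself. The demo never reads coordinates in its end handler, so that case does not come up there.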

Since this is my first time playing with the canvas object, I don't want to give too much explanation as to how it works (for fear of offering misinformation). But, as you can see in my code, I am using just a few basic commands - beginPath(), moveTo(), lineTo(), and stroke(). The canvas seems to allow the collection of rendering commands before the shape is actually rendered. The final call to stroke() causes all actions invoked after beginPath() to be rendered to the canvas.
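
The batching behavior described above can be seen by replaying the same four calls against a stand-in context. This is only a sketch: `drawStroke` and `createRecordingContext` are hypothetical helpers written for this illustration. In a browser, the context would instead come from `canvas.getContext( "2d" )`.

```javascript
// Hypothetical helper: draw one continuous stroke through a list of points.
// `context` is anything exposing the 2D path methods used in the demo.
function drawStroke(context, points) {
    if (!points.length) {
        return;
    }

    // Start a fresh path and move the pen to the first point without drawing.
    context.beginPath();
    context.moveTo(points[0].x, points[0].y);

    // Queue a segment to each remaining point.
    for (var i = 1; i < points.length; i++) {
        context.lineTo(points[i].x, points[i].y);
    }

    // Nothing is painted until stroke() is called.
    context.stroke();
}

// Stand-in context that records calls, so the sketch runs outside a browser.
function createRecordingContext() {
    var calls = [];
    return {
        calls: calls,
        beginPath: function () { calls.push("beginPath"); },
        moveTo: function (x, y) { calls.push("moveTo:" + x + "," + y); },
        lineTo: function (x, y) { calls.push("lineTo:" + x + "," + y); },
        stroke: function () { calls.push("stroke"); }
    };
}
```

The demo calls stroke() after every lineTo() so that the line appears while the finger is still moving. Each of those calls re-strokes the whole path built since beginPath(), which is why the sketch above can get away with a single stroke() at the end.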

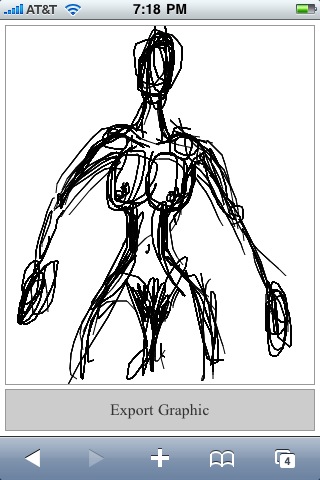

When I open the above demo page on my iPhone, this is the screen that I get:

When I touch the canvas, I initiate the drawing of lines. After some fun testing, here's what I was able to produce (reminiscent of my studio art days in college):

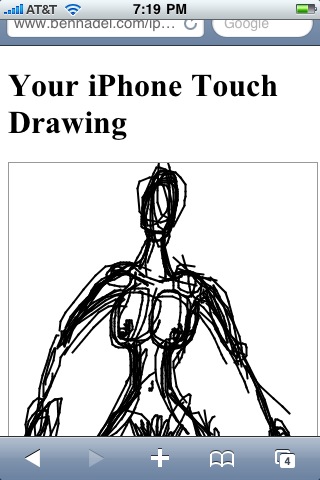

Each drawing command is both rendered on the canvas and recorded to a hidden form element. Once I finished my drawing, I clicked the "Export Graphic" button. This posted the form, along with my series of "start" and "lineTo" drawing commands, to the ColdFusion server where I could then use ColdFusion's image functionality to re-create a PNG of the canvas:

The ColdFusion code behind this server-side rendering simply consists of a series of imageDrawLine() function calls:

Export.cfm

<!--- Param the form commands value. --->

<cfparam name="form.commands" type="string" default="" />

<!--- Param the dimensions of the canvas. --->

<cfparam name="form.width" type="numeric" default="320" />

<cfparam name="form.height" type="numeric" default="480" />

<!--- Create a new ColdFusion image with the given dimensions. --->

<cfset image = imageNew(

"",

form.width,

form.height,

"rgb",

"##FFFFFF"

) />

<!--- Turn on anti-aliasing for smoother line rendering. --->

<cfset imageSetAntiAliasing(

image,

"on"

) />

<!--- Set the drawing color. --->

<cfset imageSetDrawingColor(

image,

"##000000"

) />

<!--- Define the drawing attributes. --->

<cfset penStyle = {

width = 2,

endcaps = "round"

} />

<!--- Set the pen style. --->

<cfset imageSetDrawingStroke(

image,

penStyle

) />

<!--- Set the default point to 0,0. --->

<cfset lastPenPoint = {

x = 0,

y = 0

} />

<!---

Loop over the commands in the form to draw on the image.

We are looking for two different types of commands:

- Start: X,Y

- LineTo: X,Y

--->

<cfloop

index="command"

list="#form.commands#"

delimiters=";">

<!--- Get the command type. --->

<cfset commandType = listFirst( command, ":" ) />

<!---

Get the coordinates of the command. We are going to split

the command coordinates into an array for easier

reference of X/Y values.

--->

<cfset coordinates = listToArray(

listLast( command, ":" )

) />

<!--- Check to see what the command type is. --->

<cfif (commandType eq "start")>

<!---

We are starting a new line. When starting a new line,

we don't actually have to draw - we simply have to

store this point as the last pen point.

--->

<cfset lastPenPoint = {

x = coordinates[ 1 ],

y = coordinates[ 2 ]

} />

<cfelse>

<!--- We are simply continuing the last line. --->

<cfset imageDrawLine(

image,

lastPenPoint.x,

lastPenPoint.y,

coordinates[ 1 ],

coordinates[ 2 ]

) />

<!--- Store the last pen point. --->

<cfset lastPenPoint = {

x = coordinates[ 1 ],

y = coordinates[ 2 ]

} />

</cfif>

</cfloop>

<!---

Now that we have drawn the image, write it to the browser

as a PNG file.

--->

<!DOCTYPE HTML>

<html>

<head>

<title>iPhone Touch Events With jQuery</title>

<meta

name="viewport"

content="width=device-width, initial-scale=1"

/>

</head>

<body>

<h1>

Your iPhone Touch Drawing

</h1>

<p>

<cfimage

action="writetobrowser"

source="#image#"

style="border: 1px solid ##999999 ;"

/>

</p>

<p>

<a href="./index.cfm">Draw Another Image</a>

</p>

</body>

</html>
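
The parsing loop in Export.cfm can be mirrored in JavaScript to check a command string before it is posted. This is a rough sketch under stated assumptions: `parseCommands` and the segment objects it returns are hypothetical, and it only covers the two command types used in this post. ColdFusion list loops ignore empty elements by default, which is why the leading ";" that the client prepends is harmless on the server; the sketch skips it explicitly.

```javascript
// Hypothetical helper: turn "start:x,y;lineTo:x,y;..." into line segments,
// mirroring the loop in Export.cfm.
function parseCommands(commands) {
    var segments = [];
    var lastPenPoint = { x: 0, y: 0 };

    commands.split(";").forEach(function (command) {
        var parts = command.split(":");

        // Skip empty items (such as the one before the leading ";") and
        // anything that is not in "type:x,y" form.
        if (parts.length !== 2) {
            return;
        }

        var coordinates = parts[1].split(",");
        var point = { x: Number(coordinates[0]), y: Number(coordinates[1]) };

        // A "start" command only moves the pen; anything else draws a line.
        if (parts[0] !== "start") {
            segments.push({ from: lastPenPoint, to: point });
        }

        lastPenPoint = point;
    });

    return segments;
}
```

Each returned segment corresponds to one imageDrawLine() call on the server, so the length of the array gives a quick sense of how much work a given drawing will ask of ColdFusion.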

Once ColdFusion has created the image, I then use the CFFileServlet ("writeToBrowser" action) to write it as a temporary PNG to the browser. This gives me the ability to then save the rendered image to my iPhone if so desired:

All in all, this was just a really fun experiment for me; not only did I get to try out the HTML canvas element for the first time, I got to learn more about the iPhone's touch events; and, of course, pulling ColdFusion into the picture (no pun intended) just made it all the more sweet!

Reader Comments

You are a fine artist my friend. Great post also!

@JAlpino,

Thank you kindly - I come from an artistic family :)

On a practical note, you could use this code as a way of adding signatures to an iPhone mobile app. Use the canvas element as the "Sign Here" box and use the CF code to save the signature as an image.

In a production application, you might want the ability to turn drawing mode on/off, so the user can scroll/zoom without actually drawing (obviously, I'm thinking about the iPhone)

@Dan,

I actually have an idea on a fun little project that might use it for just this concept.

@Ryan,

Yeah, definitely a good call.

On a side note, does anyone know if the Android phones have the "touch" events? Or is that just iPhone?

Never thought I might actually be of technical assistance, but this seems to answer the question:

http://news.cnet.com/google-shows-touchy-feely-android-phone/

You owe me a cookie. ;)

Wow, I am speechless. Man, I want to be Ben Nadel when I grow up.

You take three of my favorite things: Art, Coldfusion, jQuery and make a kick ass demo/app.

You, sir, are my hero. :-D

@Jyoseph,

Ha ha, thanks :)

Simply awesome. Saved my day.

@Jorge,

Sweeeet.

Hi Nadel,

This is really nice. I had the same Idea of image creation on the Java Side but I used toDataURL - toDataURL does not work on the iPhone?

@Venkat,

Are you referring to the base64-encoded data URLs for images? I think that should work in a lot of the modern browsers, including the iPhone.

Or, are you asking me *why* I didn't use data urls?

@Ben Nadel,

Thanks Ben for replying on that. Yes my question is why you have not used "base64-encoded data URLs for images" - I am curious that it might have some quirks which I do not know about.

Thanks

Venkat

@Venkat,

Using ColdFusion to create a temp image on the server just seemed like the easiest solution. ColdFusion *can* convert images to base64 encoding, but it has to do so as a file (ie. it writes the base64 encoded data to a file rather than returning it as a string). As such, I would have to convert it to base64, then read in the file back. Just another step as far as this experiment goes.

Also, there might be something to the fact that base64 encoded strings are served up as part of the master page being downloaded. If you have a linked image, the browser should be able to download it in parallel to the current page, which may allow the ultimate page processing to move along faster.

Now, in this post:

www.bennadel.com/blog/1872-Using-Base64-Canvas-Data-In-jQuery-To-Create-ColdFusion-Images.htm

... I am actually using the canvas object to convert the image data to base64 before posting it to the server. I don't think this is what you were talking about - but, I did use base64 where I felt that it did make the job easier.

Hi Ben,

I've been investigating canvas for an iPad game and tried your demo but it appears it doesn't work on here. You can't seem to stop the window scrolling or register any touch events on the canvas surface.

I wondered if you've tried this out yet? Could be a major pain for canvas apps. Seems it might need to act like FP 10.1... a double tap to give the element focus would work nicely here.

Scrap that, I was still looking for the iPhone user agent, works a treat when you also look for "iPad". Thanks for this example.

@Richard,

I have not played on the iPad yet; but as you figured, I would guess you need to add the user agent (otherwise it tries to use the mouse events, not the touch events).

I haven't played with this in a while. I have some ideas to play around with.

Great work. Do you know if there is another way to export the image besides coldfusion??

@Michael,

Thanks my man. As far as exporting, I am pretty sure that you can get the Base64-encoded data from the Canvas; but, I am not sure if that is fully supported cross-browser. Of course, once you have the encoded string, you still need to put it somewhere (local storage perhaps, or post it to the server).

Awesome post!

Do you know how to change the color of the line from being black?

Thanks,

Luke

sorry, just to clarify... of the line while you're drawing on the canvas

@Lucas,

Yeah, you just need to set the strokeStyle on the drawing context before you draw the line. For more information, check out the Mozilla docs:

https://developer.mozilla.org/En/Canvas_tutorial/Applying_styles_and_colors

Thx Ben,

great post!!!!!!

it helped me so much

Alon.

Hi Ben,

Best wishes for 2011!

Thanks for this great tutorial. My hosting does not serve coldfusion. Would you know how to do this with php?

Best regards from the Netherlands!

@Alon,

Awesome my friend :)

@Hans,

I don't know much about PHP, but you might be better looking at this post:

www.bennadel.com/blog/1872-Using-Base64-Canvas-Data-In-jQuery-To-Create-ColdFusion-Images.htm

This does the same thing; except, rather than passing commands to the processing page, it passes base64 encoded image data. From what I remember, PHP has base64 encode/decode functionality that you can probably use to convert the base64 data to a binary image.

Sorry I couldn't be more specific.

Hi Ben!

Thx for this nice tutorial!

I tried to run it on my Eclipse/BlackBerry Torch simulator dev platform but it didn't work... I can't see the drawing lines. I tried your demo on the net and it worked.

I'm trying to draw first, and I will try exporting afterward (with the .NET platform or PHP, we'll see).

Could you guide me somewhere from where i am?

Thanx a lot.

Best Regards from France.

Hi,

I tried the web link you gave on a physical BB Torch device and it works; I touched the capacitive screen with my finger and I saw lines, so I believe that if I find a pen that works with the BB Torch, your code will work as needed.

I then assume I should consider testing your code with a local web page, but on a physical device. I think RIM will ask me to sign the "app" before I am able to load it on the physical device ($20).

By the way, does anybody know where I can find a pen that works with BlackBerry devices?

Thanx a lot.

TGIF!...anyway i stay tuned!

I did not mention that I'm a newbie, so I apologize because I'm just asking questions, not providing solutions yet...

Would I be able to do this same thing and determine if the user had drawn over a specific area? I have in mind children tracing.

Hi.

This code is working fine on the iPhone, but when checked in the Android simulator it's not working the usual way.

Can anyone suggest how to handle this code for Android and BlackBerry?

Thanks in advance.

@Steven,

I can't help with that. I only have an iPhone to test with. I am not sure how the touch-events might differ from phone to phone. Sorry.

@D,

Once you draw on the canvas, you should be able to determine the data at any given pixel. I haven't done that specifically, but that *must* be possible. I would be shocked if it wasn't. According to the Mozilla docs, it looks like pixel data can be accessed as some sort of an array:

https://developer.mozilla.org/En/HTML/Canvas/Pixel_manipulation_with_canvas

@AfroLoGeek,

Glad you got something working. I haven't ever tried this on any device except the iPhone and the desktop browser.

Thanks for the reply Ben.

The problem is with canvas.toDataURL("image/png") which for iPhone works fine returning the data.

When the same function is used for Android it returns "data:,".So I'm not getting the signed data.

If screen has broken paths (a damaged line and so on), does it affect the scribbling?

Can i combine a photo with a drawing?

@Steven,

Hmmm, sorry - since the Canvas stuff is so relatively new as far as JavaScript APIs go, there's bound to be cross-browser bugs.

@Steve R.,

I don't know off-hand how moving off-and-back-on the canvas will affect the drawing. Since it's based on mouse/touch-movement, it should do *something* when you re-enter the canvas area, perhaps drawing a line from where you exited straight to where you entered.

As far as photos, the canvas API definitely allows you to programmatically paste images onto the canvas surface. I have not done this myself, however.

Thanks for this Ben. I needed info on a canvas drawing app that would work on iPad and I planned to save the image using ColdFusion. Imagine my grin when I stumbled upon all of it in one post. Thank you!

I too was looking for a signature style app and how to write the output to a CFImage. Thanks Ben! BTW, for anyone who's interested, I used Thomas Bradley's awesome signature jQuery plugin:

http://thomasjbradley.ca/lab/signature-pad

@Richard,

I am pretty new to mobile development. Can you please tell me how you customised this script for the iPad?

@Ben

Can you please tell me if you have a script for exporting the image in ASP.NET?

thanks

Hi Ben,

I tried your code on the iPhone simulator (by [webView loadHtmlString:baseURL]), but when I used the mouse to simulate touch, it didn't work - no drawing.

Does my webView require any other event hookup? I just started to play with xcode a few days ago and so I'm new.

Thanks,

Shawn

I can't remember being more impressed.

Wow.

I am going to go see what else you've done.

All the best!

Excellent work.

Really.

Also, I found out that this works on Android phones too (that is, on almost every portable device!), just by modifying the isIPhone flag (the regular expression that checks the browser signature) to add the word "Android":

E.G.

var isIPhone = ( ((new RegExp( "iPhone", "i" )).test(navigator.userAgent)) || ((new RegExp( "Android", "i" )).test(navigator.userAgent)) ) ;

Of course, the flag could be renamed something like "isMobileDevice" ...

Thanks,

Enrico

Are you able to export the image via PHP?

How can I erase or undo, please?

Thanks so much, very useful.

I was able to use PHP to save images. Check out my example - http://v1k.me/paint/
